Heavy Data

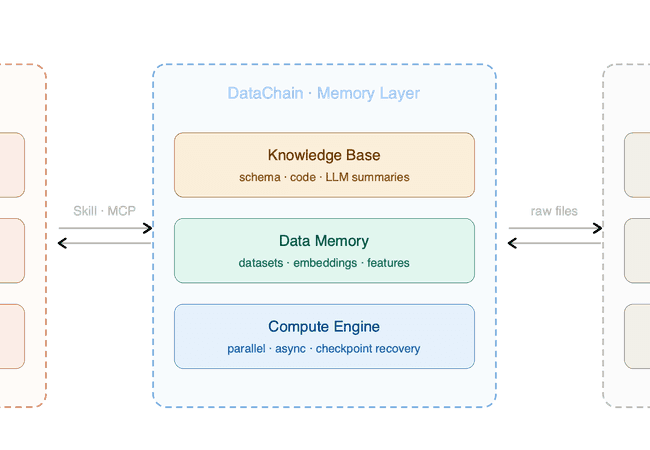

OpenAI's Data Agent and the S3 Gap

OpenAI built their in-house data agent for structured warehouse data, where schema, lineage, and queries come for free. Files in S3, GCS, or Azure (videos, sensor logs, image corpora, PDFs) have none of that, and the problems get a lot more interesting. Here is how we built the four foundations that close the gap.

The Neuro-Data Bottleneck: Why Neuro-AI Interfacing Breaks the Modern Data Stack

Neural data like EEG and MRI is never 'finished' - it's meant to be revisited as new ideas and methods emerge. Yet most teams are stuck in a multi-stage ETL nightmare. Here's why the modern data stack fails the brain.

Parquet Is Great for Tables, Terrible for Video - Here's Why

Parquet is great for tables, terrible for images and video. Here's why shoving heavy data into columnar formats is the wrong approach, and what we should build instead. Hint: it's not about the formats, it's about the metadata.

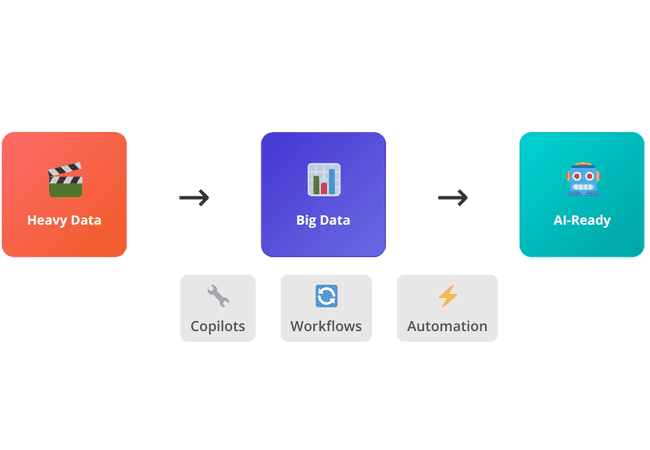

From Big Data to Heavy Data: Rethinking the AI Stack

LLMs can finally interpret unstructured video, audio, and documents — but they can't do it alone. This post introduces the concept of heavy data and explores how modern teams build multimodal pipelines to turn it into AI-ready data.