OpenAI's Data Agent and the S3 Gap

OpenAI's January post on their in-house data agent shows what a working stack looks like at 70,000 datasets and 600 PB - built for structured warehouse data, where schema, lineage, and a query surface come for free. For unstructured files in S3, GCS, or Azure - videos, sensor logs, image corpora, PDFs at petabyte scale - none of that exists by default, and the problems underneath are different and more interesting. This post walks through the four foundations we settled on to fill the gap - schema, file references, datasets, lineage - plus the database in the middle that ties them together. We are sharing it because we want to hear how others working on this problem approach it.

The Data Harness: where we are headed, once the foundation is settled

Code agents are real. The current harnesses (Claude Code, Cursor, Codex) are a different product class from a year ago: a recent r/LocalLLaMA experiment moved a 9B Qwen from 19% to 46% on the same benchmark just by switching scaffolds. The harness pulls as much weight as the weights.

For data, the picture flips. Frontier LLMs that score above 90% on Spider 1.0 collapse to 10-21% on Spider 2.0 with real enterprise schemas. Mike Stonebraker puts off-the-shelf SQL accuracy on real enterprise data at 0%, or 10% with RAG. That is structured data, with decades of warehouse infrastructure underneath.

Physical AI is taking off; neuroscience and multimodal medical imaging have breakthroughs queued behind. Each runs on data the current agent stack cannot reliably read. We have been building data infrastructure since DVC, which is to say a long time, and teams in those fields still pipe CSV exports by hand. If structured-SQL is at 0% or 21%, multimodal is not at the starting line.

OpenAI knows. When their internal team needed agents to work over 600 PB, they quietly built six layers of context infrastructure on top of everything - not because they ran out of side projects.

Their January post is the cleanest public articulation of what a data-agent stack looks like at scale. We have been seeing the same shape over files in S3, GCS, Azure: multimodal video, audio, image corpora, sensor logs, PDFs at petabyte scale. Several pieces of that stack rest on a foundation we do not have for free.

What this post is not about

Why does this matter for an agent writing code? Because the agent hits these problems on its first useful pipeline. Suppose it enriches 50,000 PDFs in your S3 bucket at roughly $5,000 and six hours. The failure modes start:

- Cold versus warm recall. Next session, if reading yesterday's enrichment costs the same as recomputing it, the agent recomputes - quietly, every session. The $5K becomes weekly.

- Checkpoint recovery. The pipeline fails two-thirds in. Without persisted compute state and a notion of an incomplete dataset (which databases were never built for), the next run starts from byte zero.

- Incremental updates. A week later, 1,200 new PDFs land. Without versioning and a dataset diff, "append" becomes "reprocess everything to be safe".

- Compiled summaries. Tomorrow's agent has no idea what is in your datasets. Without a precomputed page, it hallucinates columns or burns context scanning the bucket.
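
The incremental-update case above reduces to a set difference once every processed file is keyed by something stable. A minimal sketch, assuming path plus ETag is that key; `diff_listing` and the sample listings are hypothetical names for illustration, not DataChain's API:

```python
def diff_listing(processed, current):
    """Split a fresh bucket listing into new, changed, and unchanged paths.

    Both arguments map object path -> ETag. `processed` is what the last
    run saw; `current` is the listing taken now.
    """
    new = sorted(p for p in current if p not in processed)
    changed = sorted(
        p for p in current if p in processed and current[p] != processed[p]
    )
    unchanged = sorted(
        p for p in current if p in processed and current[p] == processed[p]
    )
    return new, changed, unchanged


# Hypothetical listings: last week's run versus today's bucket.
processed = {"a.pdf": "e1", "b.pdf": "e2"}
current = {"a.pdf": "e1", "b.pdf": "e2-rewritten", "c.pdf": "e3"}

new, changed, unchanged = diff_listing(processed, current)
todo = new + changed  # only these need the expensive enrichment pass
```

Without a persisted record of what the last run saw, there is nothing to diff against, which is why "reprocess everything" becomes the default.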

Each deserves its own post, and we will write them. But none of them works without four boring primitives in place first. This post is how we settled those - four questions that warehouse stacks settled decades ago and that we had to settle ourselves for files in object storage. Without them, neither the spicy stuff above nor the Physical AI, neuroscience, and multimodal medical imaging breakthroughs that depend on them get to happen.

Four questions we had to answer

In OpenAI's stack, code is the source of truth about data - their Layer 3 extracts grain and primary keys straight from pipeline SQL. We agree, with one wrinkle: code lives in Python, data lives in S3, GCS, or Azure as bytes, and binding the two is most of what follows. Each question below starts with a real person hitting a wall the warehouse world does not have.

What is a schema for a file?

An autonomy engineer has 800 hours of dashcam footage and synchronized sensor streams. The task: find every five-second clip where a pedestrian and a parked truck are within five meters and the vehicle is moving above 20 km/h. Before any of that - what is a "clip"? Is the "pedestrian present" annotation a bool, a confidence score, a bounding box, all three? What does it mean to filter on vehicle speed when speed is a 100 Hz sensor stream?

In a warehouse this question never comes up. The table has columns; the columns have types; INFORMATION_SCHEMA answers in milliseconds. Schema is metadata you read.

For files, schema is something you have to invent the language to express, and then produce by running heavy compute. There is no schema in the bytes of an MP4 until a model extracts one.

The obvious candidates fail. A SQL table schema cannot express a typed file reference, nested LLM-response objects, or the 1,000-column robotics records normal in physical AI work. JSON Schema, Avro, and Protobuf are description layers parallel to the code, drifting the moment work moves faster than maintenance - the same Gartner-documented failure that struck YAML semantic models in catalog tools.

The answer that held up: Pydantic. Schema is code. For the autonomy clip task above, the dataset's schema is one class:

    from pydantic import BaseModel
    from typing import Literal
    import datachain as dc

    class BoundingBox(BaseModel):
        x: float
        y: float
        w: float
        h: float

    class Pedestrian(BaseModel):
        box: BoundingBox
        distance_m: float
        pose: Literal["standing", "walking", "running"]

    class ParkedVehicle(BaseModel):
        box: BoundingBox
        type: Literal["car", "truck", "bus"]
        distance_m: float

    class ClipAnnotation(BaseModel):
        file: dc.File                  # video clip in object storage
        duration_s: float
        speed_kmh: list[float]         # one sample per second
        pedestrians: list[Pedestrian]
        vehicles_parked: list[ParkedVehicle]
        weather: Literal["clear", "rain", "fog", "snow", "night"]
        vlm_summary: str | None        # optional caption from a vision LLM

One row per clip. Nested objects, lists, and typed enums travel with the row; the bytes of the clip stay in the bucket, addressed through the file: dc.File field. A SQL schema cannot express any of this without three sidecar tables and a JSON column for whatever does not fit.
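
Once rows carry these types, the autonomy engineer's query becomes an ordinary Python predicate. The sketch below uses trimmed-down models so it runs with Pydantic alone. It rests on assumptions the text leaves open: `distance_m` is taken as distance from the recording vehicle, "moving above 20 km/h" is read as every speed sample exceeding the threshold, and a plain `path` string stands in for the dc.File pointer. `Clip` and `is_match` are hypothetical names.

```python
from typing import Literal

from pydantic import BaseModel


class Pedestrian(BaseModel):
    distance_m: float


class ParkedVehicle(BaseModel):
    type: Literal["car", "truck", "bus"]
    distance_m: float


class Clip(BaseModel):
    path: str  # stand-in for the dc.File pointer
    speed_kmh: list[float]
    pedestrians: list[Pedestrian]
    vehicles_parked: list[ParkedVehicle]


def is_match(clip: Clip, max_dist_m: float = 5.0, min_speed: float = 20.0) -> bool:
    near_pedestrian = any(p.distance_m <= max_dist_m for p in clip.pedestrians)
    near_truck = any(
        v.type == "truck" and v.distance_m <= max_dist_m
        for v in clip.vehicles_parked
    )
    # Assumption: every speed sample in the clip must exceed the threshold.
    moving = bool(clip.speed_kmh) and min(clip.speed_kmh) > min_speed
    return near_pedestrian and near_truck and moving


truck = ParkedVehicle(type="truck", distance_m=4.1)
car = ParkedVehicle(type="car", distance_m=2.0)
walker = Pedestrian(distance_m=3.2)

clips = [
    Clip(path="clip_001.mp4", speed_kmh=[24.0, 26.5, 25.0],
         pedestrians=[walker], vehicles_parked=[truck]),
    Clip(path="clip_002.mp4", speed_kmh=[24.0, 18.0, 25.0],  # dips below 20
         pedestrians=[walker], vehicles_parked=[truck]),
    Clip(path="clip_003.mp4", speed_kmh=[30.0, 31.0, 29.5],  # no truck nearby
         pedestrians=[walker], vehicles_parked=[car]),
]

matches = [c.path for c in clips if is_match(c)]
```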

Pydantic is also what every frontier LLM speaks natively for structured output - so the schema the pipeline declares is the schema the model fills. How each row points at the actual bytes, and how the collection becomes a unit picked up by name, is what the next two questions answer.
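
To make "the schema the model fills" concrete, here is a small sketch assuming Pydantic v2. The `Weather` class and the two JSON strings are made up for illustration; in practice the JSON would come back from a model's structured-output call, which is not shown here.

```python
from typing import Literal

from pydantic import BaseModel, ValidationError


class Weather(BaseModel):
    condition: Literal["clear", "rain", "fog", "snow", "night"]
    confidence: float


# The JSON Schema that a structured-output request would be given.
schema = Weather.model_json_schema()

# Hypothetical model responses: one valid, one with an off-enum value.
good = '{"condition": "fog", "confidence": 0.92}'
bad = '{"condition": "hail", "confidence": 0.92}'

parsed = Weather.model_validate_json(good)

try:
    Weather.model_validate_json(bad)
    rejected = False
except ValidationError:
    rejected = True  # the same class that declared the schema enforces it
```

The class serves both directions: it tells the model what shape to produce and rejects responses that drift from it.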

What is a file reference?

An ML engineer is working on a billion-image LAION-scale corpus. After running a quality model, 3,200 images pass her threshold. She does not want to copy them, or write a sidecar JSON that goes stale the first time someone moves the corpus. She wants a typed dataset where each row points at the original bytes and the code reading it next month knows exactly which.

In a warehouse this does not come up - the data is the row. For files, the bytes do not move; each row needs a typed pointer carrying storage location, size, ETag, last-modified time, and storage version. A path string, a URI, or a sidecar JSON each fail at least one of those.

The thing that works is a typed File class alongside the rest of the columns. Storage location, path, size, ETag, last-modified, version - all baked in. The bytes stay in the bucket. The file: dc.File field on the ClipAnnotation schema is one of these, just a Pydantic class under the hood:

    from datetime import datetime
    from pydantic import BaseModel

    class File(BaseModel):
        source: str              # "s3://bucket-name", "gs://...", "azure://..."
        path: str                # "path/to/audio.mp3"
        size: int                # bytes
        etag: str                # storage version identifier
        version: str             # object storage version id, if enabled
        last_modified: datetime

And because Pydantic classes compose, you do not need a new primitive for every richer file-shaped object. An audio fragment is a File plus two timestamps:

    class AudioFragment(BaseModel):
        audio: File
        start: float
        end: float
        transcript: str | None

Video clips, image patches, document chunks all follow the same pattern: a File column plus whatever annotations the work needs. The bytes never move.
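
What the extra fields buy over a bare path string is the ability to notice that the bytes changed underneath the row. A minimal sketch using a standard-library dataclass as a stand-in for the File class; `FileRef`, `is_current`, and the metadata dicts are hypothetical, and the fresh metadata would in practice come from a HEAD request against the bucket:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileRef:
    """Hypothetical stand-in for a typed file pointer, trimmed to four fields."""

    source: str
    path: str
    size: int
    etag: str

    @property
    def uri(self) -> str:
        return f"{self.source.rstrip('/')}/{self.path.lstrip('/')}"

    def is_current(self, head: dict) -> bool:
        """Compare the stored pointer against freshly fetched object metadata."""
        return head.get("etag") == self.etag and head.get("size") == self.size


ref = FileRef("s3://bucket-name", "path/to/audio.mp3", size=1024, etag="abc123")

# Hypothetical metadata as it might look before and after an overwrite.
same = {"etag": "abc123", "size": 1024}
overwritten = {"etag": "def456", "size": 2048}

still_valid = ref.is_current(same)
went_stale = not ref.is_current(overwritten)
```

A path string would resolve to the overwritten object without complaint; the typed pointer can tell that it is no longer the file the row was computed from.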

Schema binds types-in-code to columns-in-storage. File binds bytes-in-bucket to a typed handle. The next question is what turns rows of typed columns and file pointers into a unit a teammate can find by name and build on.

What is a dataset?

A neuroscience researcher has 300 GB of EEG and MRI recordings in a bucket. Two weeks ago a colleague ran an extraction pipeline producing a feature set on high-frequency oscillations. The researcher wants to point Claude Code at the result by name, say "extend this to the new MRI modality", and have the agent pick up the schema, file pointers, and prior conclusions without reconstructing anything from a notebook. We wrote earlier this year about this friction - the colleague-to-agent handoff is where the modern data stack fails first on neural data.

In a warehouse this is trivial: the colleague produced a table with a name and a schema, and the next person or agent reads it. For files, the unit is not given. A folder of CSVs has no schema in code or version chain. A Parquet file has no registry or automatic lineage. A warehouse table cannot carry Python types or capture the Python code that made it. None is something you can hand to an agent and say "build on this".

The answer: a typed, named, semver-versioned record collection. The Pydantic schema from two questions ago, the File columns from the question just above, a name a teammate or agent can resolve, and a version the system assigns:

    dc.read_storage("s3://eeg-corpus/").map(extract).save("eeg_features")

The save creates eeg_features@1.0.0; a second save creates 1.0.1. Customers pushed us toward proper semver, plus namespaces (team_brain.prod.eeg_features, like database.schema.table) so owner and environment are visible at a glance. Anyone running dc.read_dataset("eeg_features@1.0.0") gets the artifact back, schema included:

    >>> dc.read_dataset("eeg_features@1.0.0").print_schema()
    file: File@v1
        source: str
        path: str
        size: int
        etag: str
        version: str
        last_modified: datetime
    subject_id: str
    band: str
    power: float
    spike_count: int

Pointing Claude Code at the name is a working convention - the agent reads the schema, knows column types, knows where the bytes live, and writes the next pipeline against a settled premise.

This is where Python's dynamism becomes a design constraint. OpenAI's Layer 3 reads grain off pipeline SQL because SQL is static; Python is not. The safer move is to capture the schema at save time, when the runtime has resolved which Pydantic class came out and which records conformed. The save itself never overwrites - that rule came from the version where it did, and trust collapsed within a week. Immutability is not a preference; it is the only safe contract.
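
The two rules in that paragraph, schema captured at save time and saves that never overwrite, fit in a few lines. This is a toy in-memory sketch, not how DataChain stores anything: `Registry` is a hypothetical class, the schema is inferred from the first row's runtime types as a stand-in for resolving a Pydantic class, and every save is assumed to be a patch bump.

```python
class Registry:
    """Toy registry: each name maps to an append-only set of versions."""

    def __init__(self):
        self._versions = {}  # name -> {(major, minor, patch): (schema, rows)}

    def save(self, name, rows):
        rows = [dict(r) for r in rows]  # copy so later mutation cannot leak in
        # Capture the schema from what actually came out at runtime.
        schema = {k: type(v).__name__ for k, v in rows[0].items()} if rows else {}
        versions = self._versions.setdefault(name, {})
        if versions:
            major, minor, patch = max(versions)
            version = (major, minor, patch + 1)  # never reuse an existing version
        else:
            version = (1, 0, 0)
        versions[version] = (schema, tuple(rows))
        return f"{name}@{'.'.join(map(str, version))}"

    def read(self, ref):
        name, _, ver = ref.partition("@")
        versions = self._versions[name]
        key = tuple(int(x) for x in ver.split(".")) if ver else max(versions)
        return versions[key]


reg = Registry()
first = reg.save("eeg_features", [{"subject_id": "s01", "power": 0.42}])
second = reg.save(
    "eeg_features", [{"subject_id": "s01", "power": 0.42, "spike_count": 7}]
)

old_schema, _ = reg.read(first)             # pinned version is untouched
latest_schema, _ = reg.read("eeg_features")  # bare name resolves to latest
```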

How do we track lineage?

A customer-support team lead has run an agent over a million-ticket archive. It flagged 47 for escalation. The manager asks: "Why those 47?" Nobody knows - no record of which prompt, which model, which sub-pipeline, or what the agent expected. The classification exists; the chain of reasoning that produced it does not.

In a warehouse this barely exists as a question - query log, dbt graph, and version-controlled pipeline source give most of the answer. (Yes, there is a data-lineage industry layered on top, which is how we know it is not always enough; we politely set that aside.) For files, the bucket's access log has no semantics. Capture lineage at save, or it is gone.

A 12-year-old Sussman quote resurfaced last week after an AI agent deleted a production database, naming exactly the three properties we needed:

"I want it to tell me why it did the thing it did, what it thought was going to happen, and then what happened instead."

The answer is lineage as a structural side effect of save: parents, source code, author, and time recorded automatically. The Sussman properties land in three columns - source code (why), the Pydantic contract (what was expected), the rows and statistics (what happened). The team lead's question becomes answerable months later, not an interrogation of memory.
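
A sketch of what "lineage as a side effect of save" could look like, using only the standard library. Everything here is illustrative: `save_with_lineage`, the ticket fields, and the escalation rule are hypothetical, and a set of expected field names stands in for the Pydantic contract.

```python
import inspect
from datetime import datetime, timezone


def save_with_lineage(name, rows_in, fn, *, parents, author, expected_fields):
    """Apply `fn` as a filter and return the kept rows plus a lineage record."""
    rows_out = [row for row in rows_in if fn(row)]
    try:
        source = inspect.getsource(fn)
    except (OSError, TypeError):
        # Source text is not retrievable in every environment (some REPLs).
        source = f"<source unavailable for {fn.__qualname__}>"
    lineage = {
        "name": name,
        "parents": list(parents),
        "author": author,
        "created_at": datetime.now(timezone.utc).isoformat(),
        "source_code": source,                        # why
        "expected_fields": sorted(expected_fields),   # what was expected
        "stats": {                                    # what happened
            "rows_in": len(rows_in),
            "rows_out": len(rows_out),
        },
    }
    return rows_out, lineage


def needs_escalation(ticket):
    return ticket["sentiment"] < -0.5 and ticket["reopened"]


tickets = [
    {"id": 1, "sentiment": -0.9, "reopened": True},
    {"id": 2, "sentiment": -0.7, "reopened": False},
    {"id": 3, "sentiment": 0.4, "reopened": True},
    {"id": 4, "sentiment": -0.6, "reopened": True},
]

flagged, lineage = save_with_lineage(
    "tickets_flagged",
    tickets,
    needs_escalation,
    parents=["tickets@1.0.0"],
    author="team-lead",
    expected_fields={"id", "sentiment", "reopened"},
)
```

The caller never asks for lineage; it falls out of the save call, which is the property that makes it available months later.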

This is also why a dataset is more than data. A dataset is rows plus a policy on how they were created and how they are read: schema (the contract), immutability and versioning (the rules), source code, parents, and author at save (provenance), and typed columns and annotations (semantics). All five land together at save as one immutable, versioned artifact.

A database in the middle, because there is no other way

Where does any of this actually live? Schemas, names, versions, lineage, typed columns, embeddings, features all need atomic transactions, schema enforcement, parent-edge graph traversal, and an index that lets a million-row filter run in under a second. Object storage has none of those; memory does not outlive the session; a flat catalog has no enforcement. The only thing that fits is a structured database in the middle - not because we love databases, but because humanity has been putting structured things in databases for fifty years for a reason.
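
Two of those requirements, atomic transactions and parent-edge traversal, can be shown with nothing more than SQLite from the standard library. The table layout and dataset names below are hypothetical and far simpler than a production catalog:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE datasets (id TEXT PRIMARY KEY);
    CREATE TABLE edges (child TEXT NOT NULL, parent TEXT NOT NULL);
    """
)

with conn:  # one transaction: all rows land or none do
    conn.executemany(
        "INSERT INTO datasets VALUES (?)",
        [("raw@1.0.0",), ("labels@1.0.0",), ("features@1.0.0",), ("flagged@1.0.0",)],
    )
    conn.executemany(
        "INSERT INTO edges VALUES (?, ?)",
        [
            ("features@1.0.0", "raw@1.0.0"),
            ("features@1.0.0", "labels@1.0.0"),
            ("flagged@1.0.0", "features@1.0.0"),
        ],
    )

# Walk parent edges transitively with a recursive CTE.
ancestors = [
    row[0]
    for row in conn.execute(
        """
        WITH RECURSIVE ancestors(id) AS (
            SELECT parent FROM edges WHERE child = ?
            UNION
            SELECT e.parent FROM edges e JOIN ancestors a ON e.child = a.id
        )
        SELECT id FROM ancestors ORDER BY id
        """,
        ("flagged@1.0.0",),
    )
]

# A failed write rolls back everything in its transaction, edge included.
try:
    with conn:
        conn.execute("INSERT INTO edges VALUES (?, ?)", ("orphan@1.0.0", "raw@1.0.0"))
        conn.execute("INSERT INTO datasets VALUES (?)", ("raw@1.0.0",))  # duplicate
except sqlite3.IntegrityError:
    pass

orphan_edges = conn.execute(
    "SELECT COUNT(*) FROM edges WHERE child = ?", ("orphan@1.0.0",)
).fetchone()[0]
```

Neither a bucket listing nor an in-session Python dict gives you the rollback or the transitive walk without rebuilding a database by hand.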

The user, the schema, and the agent all speak Python, though. So part of what had to be built is a transpiler from Pydantic-style chained operations to SQL, and from the typed result back to Pydantic objects:

    (
        dc.read_storage("s3://eeg-corpus/")
        .group_by(dc.func.path.ext("file.path"))
        .agg(
            n_files=dc.func.count(),
            total_bytes=dc.func.sum("file.size"),
        )
        .to_pandas()
    )

That chain runs against billions of rows on ClickHouse at LAION scale, or hundreds of millions on a single SQLite file when the team does not need a cluster. The researcher never sees SQL; the agent never writes SQL.
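
To show the idea of compiling a chain to SQL rather than executing it step by step, here is a deliberately tiny sketch over SQLite. It is not DataChain's transpiler: the `Chain` class, its `(function, column)` tuples, and the `files` table are hypothetical, and results come back as plain dicts instead of Pydantic objects.

```python
import sqlite3


class Chain:
    """Toy chain: records operations, then compiles them to one SQL statement."""

    def __init__(self, table):
        self.table = table
        self.keys = []
        self.aggs = {}

    def group_by(self, *columns):
        self.keys = list(columns)
        return self

    def agg(self, **named):
        # Each value is (SQL function, column), e.g. ("SUM", "size").
        self.aggs = dict(named)
        return self

    def to_sql(self):
        # Identifiers are interpolated directly: fine for a sketch,
        # unsafe for untrusted input.
        select = list(self.keys) + [
            f"{fn}({col}) AS {alias}" for alias, (fn, col) in self.aggs.items()
        ]
        sql = f"SELECT {', '.join(select)} FROM {self.table}"
        if self.keys:
            sql += f" GROUP BY {', '.join(self.keys)}"
        return sql

    def to_rows(self, conn):
        cur = conn.execute(self.to_sql())
        names = [d[0] for d in cur.description]
        return [dict(zip(names, row)) for row in cur]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE files (ext TEXT, size INTEGER)")
conn.executemany(
    "INSERT INTO files VALUES (?, ?)",
    [(".edf", 100), (".edf", 250), (".nii", 4000)],
)

chain = Chain("files").group_by("ext").agg(
    n_files=("COUNT", "*"), total_bytes=("SUM", "size")
)
sql = chain.to_sql()
rows = sorted(chain.to_rows(conn), key=lambda r: r["ext"])
```

Nothing runs until `to_rows` is called, so the whole chain reaches the database as a single statement that its planner and indexes can work on.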

The bucket stays the source of truth for bytes. The database becomes the source of truth for the rest - names, versions, lineage, typed columns, embeddings, features, metadata. We have, in effect, reduced the unstructured-data problem to the structured-data problem - the same architectural job OpenAI gets from a warehouse, with a bucket underneath instead.

The Data Harness on top

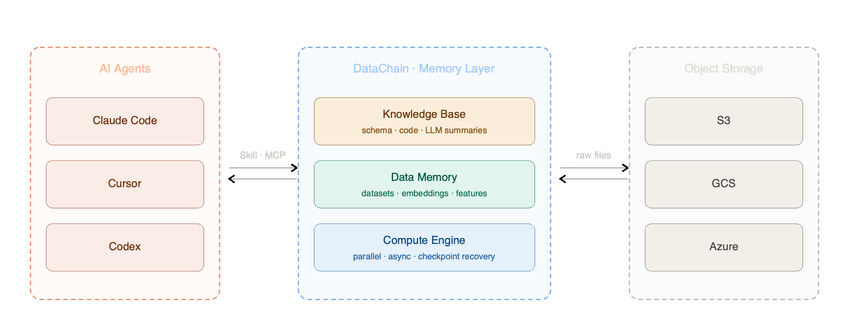

The four primitives and the database in the middle are the foundation. The Data Harness sits on top of them: layered context the agent reads before generating code, a Knowledge Base of compiled dataset summaries, a Compute Engine that runs heavy work in parallel and survives failures, a Skill and MCP surface that lets Claude Code, Cursor, and Codex reach it the same way they reach a code repository. Each piece is rich enough for its own post:

- Recall economics - the four-to-five-order-of-magnitude gap between re-running an LLM pass and reading a typed result.

- Checkpoint-recoverable pipelines - so a failure two-thirds through a $5,000 enrichment does not start over.

- Compiled knowledge - finite-token dataset pages, so agents do not pull raw chunks and hallucinate columns.

- Skill and MCP wiring - so the existing agent surface reaches this layer without a new tool.
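
As a taste of the checkpoint item in the list above, here is a minimal sketch of resumable processing: finished keys are committed to SQLite one at a time, so a rerun skips them. The `run` function, the `done` table, and the simulated failure are hypothetical and say nothing about how the Compute Engine is implemented.

```python
import sqlite3


def run(conn, keys, process):
    """Process each key once; finished keys are committed as they complete."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS done (key TEXT PRIMARY KEY, result TEXT)"
    )
    finished = {row[0] for row in conn.execute("SELECT key FROM done")}
    for key in keys:
        if key in finished:
            continue  # already paid for in an earlier run
        result = process(key)  # may raise partway through the batch
        with conn:  # commit per item so a crash loses at most one
            conn.execute("INSERT INTO done VALUES (?, ?)", (key, result))


calls = []


def flaky(key):
    """Stand-in for an expensive step that fails once on c.pdf."""
    calls.append(key)
    if key == "c.pdf" and calls.count("c.pdf") == 1:
        raise RuntimeError("simulated failure")
    return key.upper()


conn = sqlite3.connect(":memory:")
keys = ["a.pdf", "b.pdf", "c.pdf", "d.pdf"]

try:
    run(conn, keys, flaky)
except RuntimeError:
    pass  # first attempt dies at c.pdf

run(conn, keys, flaky)  # resumes at c.pdf; a.pdf and b.pdf are not redone

completed = [row[0] for row in conn.execute("SELECT key FROM done ORDER BY key")]
```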

None of these works without the foundation - which is why we walked through the boring four first.

DataChain is how we ship this - the four primitives, the database in the middle, the Compute Engine on top, all in one Python library, bucket as source of truth. We are sharing how we build it because we are still figuring some of it out and want to hear how others are doing this. If you have questions, disagreements, or your own version, please reach out.